If you own onboarding, lifecycle messaging, product analytics, or growth for a new app, this is the issue to watch: a strong launch dashboard can make the product look healthy while future retention is already weakening.

A launch can create a false sense of success. Installs spike. Sessions rise. Internal channels get louder. People start sharing dashboard screenshots because the numbers finally move in the right direction. For a few days, sometimes a few weeks, the product looks like it has traction.

That is exactly when teams can misread the situation. The example in this article comes from a specific mobile app launch: Faraday Future’s FF Intelligent App, launched during a high-profile period for the company, around its public market debut and its electric vehicle reservation push. The app was designed to give prospective customers, vehicle enthusiasts, and brand followers a direct product experience through content, community-style engagement, and reservation-related touchpoints.

The launch had attention. It had campaign traffic. It had leadership visibility. What the team did not yet have was habit.

The question was simple: which users had found real value, and which users were already starting to slip away? At the start of that launch, the team looked at standard metrics: installs, sessions, opens, and click-through rate. Those numbers were useful for measuring launch activity. They were weak at measuring retention risk. They showed who arrived. They did not show who would stay.

That is where the work had to shift from launch reporting to product diagnosis.

The first mistake: measuring attention instead of value

This is the most common post-launch mistake I see.

Attention is easy to count. Product value is harder to evaluate. A first open does not prove intent. A push click does not prove interest. Even a second session can be misleading if the user still has not completed the action that makes the app useful.

Teams should answer one question early: what counts as a meaningful early action for this product?

For one app, that might be completing onboarding and using one core feature. For another, it might be saving content, following a topic, joining a workspace, completing setup, or returning without a campaign reminder. The action changes by product. The discipline does not.

Many teams skip this step. They move straight to the dashboard, add more events, and argue about which chart belongs in the weekly review. Weeks later, they still cannot explain churn in a way that leads to action.

A better approach is to identify the small set of first actions that indicate value, then measure whether new users actually complete them.

Before you predict churn, define it

“Churn” sounds precise. In practice, it usually is not.

For a subscription product, churn might mean cancellation. For a marketplace product, it might mean a decline in transaction frequency. For a mobile app, churn often sits somewhere between inactivity, low engagement, and incomplete onboarding.

For that reason, teams should define churn behaviorally before modeling it.

In the FF Intelligent App launch, “did not open the app yesterday” was too severe. That would have flagged too many users too early. “Has not used the app in months” was too late. By then, the team would have lost the chance to intervene.

The more useful definition combined two things:

• A short inactivity window that suggested fading interest

• Failure to complete a small number of early actions that usually showed the user had reached value
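The two-part definition can be expressed as a simple rule. The sketch below is one plausible reading, and it assumes both conditions must hold at the same time. The three-day window and the action names `onboarding_completed` and `first_core_action` are hypothetical placeholders, because the exact values used in the launch are not given here.

```python
from datetime import datetime, timedelta

# Hypothetical thresholds: the article does not publish the exact values used.
INACTIVITY_WINDOW = timedelta(days=3)
EARLY_VALUE_ACTIONS = {"onboarding_completed", "first_core_action"}


def is_churn_risk(last_seen: datetime, completed_actions: set[str], as_of: datetime) -> bool:
    """Flag a user only when both conditions hold:
    1. no activity inside the short inactivity window, and
    2. at least one early value action is still missing."""
    inactive = as_of - last_seen > INACTIVITY_WINDOW
    missing_value = not EARLY_VALUE_ACTIONS.issubset(completed_actions)
    return inactive and missing_value


now = datetime(2024, 1, 10)
print(is_churn_risk(datetime(2024, 1, 5), {"onboarding_completed"}, now))  # inactive, value not reached
print(is_churn_risk(datetime(2024, 1, 9), {"onboarding_completed"}, now))  # recently active
print(is_churn_risk(datetime(2024, 1, 5), EARLY_VALUE_ACTIONS, now))       # inactive, but reached value
```

Requiring both conditions keeps the flag away from users who are simply between sessions after already reaching value.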

This changed the discussion. Instead of treating churn as a vague retention number, the team could connect it to missed value moments.

The questions became more useful:

• Which early action separates curiosity from genuine interest?

• How soon do those signals appear?

• Which missed action deserves a product or lifecycle response?

That is when the model had a useful job.

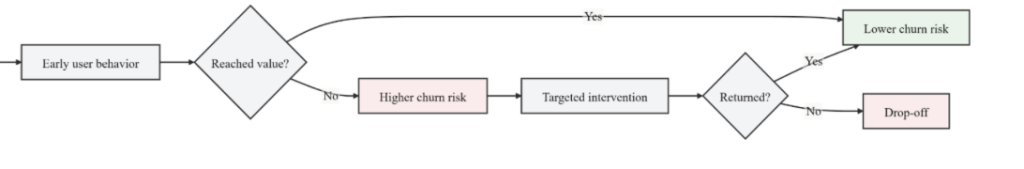

Figure 1. A simple retention loop: track early user behavior, identify users who have not crossed the value threshold, and trigger the next best action before they drop off.

The useful signals were ordinary

The strongest predictors were not surprising. They were behavior signals.

That matters because teams often treat a churn model as a black box. But the hard part is usually not choosing the algorithm. It is choosing signals that represent user value and can influence a decision.

In this launch, the useful early signals included:

• whether the user completed onboarding

• whether the user returned for a second session

• whether the user completed the first core action

• how deep the first session went

• whether the user responded to a lifecycle touchpoint

• whether the user came back organically, rather than only after a campaign nudge
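You can derive each of these signals from a plain event log. The following sketch assumes a hypothetical `Event` record that carries a session ID and a source label (`"organic"` or `"campaign"`). The event names are also hypothetical. First-session depth is approximated as the number of events in the first session, which is only one of several reasonable proxies.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Event:
    # Hypothetical event schema; real pipelines will differ.
    name: str
    ts: datetime
    session_id: str
    source: str = "organic"  # assumed labels: "organic" or "campaign"


def early_signals(events: list[Event]) -> dict[str, object]:
    """Turn one user's event log into the six early signals listed above."""
    events = sorted(events, key=lambda e: e.ts)
    names = {e.name for e in events}
    sessions: list[str] = []          # session IDs in order of first appearance
    session_source: dict[str, str] = {}  # source of each session's first event
    for e in events:
        if e.session_id not in session_source:
            sessions.append(e.session_id)
            session_source[e.session_id] = e.source
    first_session = sessions[0] if sessions else None
    return {
        "onboarding_completed": "onboarding_completed" in names,
        "second_session": len(sessions) >= 2,
        "first_core_action": "first_core_action" in names,
        # Depth proxy: number of events recorded in the first session.
        "first_session_depth": sum(e.session_id == first_session for e in events),
        "responded_to_touchpoint": "touchpoint_opened" in names,
        # Organic return: any later session that did not start from a campaign.
        "organic_return": any(session_source[s] == "organic" for s in sessions[1:]),
    }


t = datetime(2024, 1, 1)
log = [
    Event("app_open", t, "s1", "campaign"),
    Event("onboarding_completed", t.replace(minute=5), "s1", "campaign"),
    Event("touchpoint_opened", t.replace(day=3), "s2", "campaign"),
    Event("app_open", t.replace(day=3, minute=1), "s2", "campaign"),
]
print(early_signals(log))
```

In this example, the user finishes onboarding and comes back, but only through a campaign and without reaching the first core action. The column counts alone would hide that pattern.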

User characteristics helped at the edges. Behavior did most of the work. That distinction matters. Demographic cuts can make a slide deck look polished without giving the team anything useful to do. A plain behavior flag can look less impressive but tell the team exactly what to fix next week.

The same rule applies to tooling. Teams do not need a perfect stack to start. An event-driven analytics setup or clean warehouse events are enough if they support three basics: cohorting, retention cuts, and a shared event dictionary.

If the event data is messy, the model will only formalize the mess.
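To illustrate the "retention cuts" basic, the sketch below groups users into weekly install cohorts. It then reports the share of each cohort that came back at least once within N days of install. The function name, the Monday-start cohort boundary, and the seven-day default are assumptions. Teams often define retention differently (for example, active on exactly day N), so use whatever definition the shared event dictionary specifies.

```python
from collections import defaultdict
from datetime import date, timedelta


def retention_by_cohort(
    installs: dict[str, date],
    active_days: dict[str, set[date]],
    day_n: int = 7,
) -> dict[date, float]:
    """Share of each weekly install cohort that returned within `day_n` days.

    A user counts as retained if they were active on any day after install,
    up to and including install + day_n (an assumed definition).
    """
    cohorts: dict[date, list[str]] = defaultdict(list)
    for user, installed in installs.items():
        # Cohort key: the Monday of the install week (assumed boundary).
        cohorts[installed - timedelta(days=installed.weekday())].append(user)

    result: dict[date, float] = {}
    for week_start, users in sorted(cohorts.items()):
        retained = 0
        for user in users:
            start = installs[user]
            end = start + timedelta(days=day_n)
            if any(start < d <= end for d in active_days.get(user, set())):
                retained += 1
        result[week_start] = retained / len(users)
    return result


installs = {"a": date(2024, 1, 1), "b": date(2024, 1, 2), "c": date(2024, 1, 9)}
active_days = {"a": {date(2024, 1, 4)}, "c": {date(2024, 1, 20)}}
print(retention_by_cohort(installs, active_days))
```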

The model mattered less than the workflow around it

A churn score by itself is easy to ignore. It gets attention for a week, then disappears into a dashboard.

It becomes useful only when it changes what teams do. In this project, the shift was not just building a churn prediction model. The bigger shift was treating the score as an input into product and lifecycle decisions.

Different risk patterns led to different actions:

• Users who started onboarding but did not complete it needed a shorter path back in, not a generic reminder.

• Users who browsed but did not complete the first core action needed one clear prompt pointing them to that action.

• Users who showed early intent but later faded needed an email or push message tied to the content or feature they first engaged with.

• Users who returned only after campaign prompts needed product-side work, because borrowed traffic is not retention.
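You can write down the mapping from risk pattern to response as a small rule table. In this hypothetical sketch, the segment names mirror the four bullets above and the signal keys are invented for illustration. Segments are checked in funnel order so that each user lands in exactly one segment. That ordering is a design choice, not something the launch specified.

```python
from typing import Optional

# Illustrative segment names and action wording, one per risk pattern above.
ACTIONS = {
    "unfinished_onboarding": "Send a shortcut back into the onboarding step they left",
    "no_first_core_action": "Show one prompt pointing to the first core action",
    "faded_after_intent": "Message about the content or feature they first used",
    "campaign_only_return": "Route to product backlog: returns depend on campaigns",
}


def assign_segment(signals: dict[str, bool]) -> Optional[str]:
    """Return the first matching risk segment, checked in funnel order."""
    if signals.get("onboarding_started") and not signals.get("onboarding_completed"):
        return "unfinished_onboarding"
    if not signals.get("first_core_action"):
        return "no_first_core_action"
    if signals.get("recently_inactive"):
        return "faded_after_intent"
    if signals.get("campaign_returns") and not signals.get("organic_return"):
        return "campaign_only_return"
    return None  # no risk pattern matched


user = {"onboarding_started": True, "onboarding_completed": True, "first_core_action": False}
segment = assign_segment(user)
print(segment, "->", ACTIONS[segment])
```

Keeping the action text next to the segment name makes it harder for a score to exist without a planned response.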

That is a better operating model than sending the same follow-up message to every user.

This is also where product-led thinking becomes practical. The question is no longer, “How do we get them back?” The better question is, “What part of the experience failed to earn the next visit?”

That second question leads to better backlog decisions.

What changed once the team acted on the signals

The targeted campaigns lifted active users by 35%.

That result mattered. But the bigger change was how the team discussed retention. Before this initiative, launch performance and user health were tightly linked in the team’s thinking. Afterward, they became easier to separate. A successful launch was no longer treated as proof of product strength. It became a short learning window.

Ownership became clearer too. Before the churn work, retention felt like a broad metric owned by “the business.” Once churn was mapped to missed early actions, ownership became easier to assign. Some problems sat in onboarding. Some sat in lifecycle messaging. Some sat in the product’s ability to expose value quickly.

That made prioritization easier. It also reduced some of the politics in team discussions. Teams tend to do better work when they are debating user behavior rather than defending their function.

Four lessons product teams can use right away

1. Define value before you define churn

Do not start with the model. Start with the moment that tells you a user has received something useful from the product.

A simple formula works well: A new user has reached value when they complete X within Y time. For the FF Intelligent App launch, the value definition had to be tied to early product engagement, not just app opens. The team needed to understand whether a user had moved beyond curiosity into actual use.

Two practical tips:

• Pick one primary value event and one supporting return event. For example: “completed onboarding” plus “returned for a second session within seven days.”

• Avoid vague metrics like “was active.” Use a behavior that a PM could point to on a journey map.
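The "complete X within Y time" formula translates directly into a check. This sketch uses the example pair from the tip above. It assumes that both events must fall within seven days of the user's first open. The function and event names are hypothetical.

```python
from datetime import datetime, timedelta


def reached_value(
    first_open: datetime,
    event_times: dict[str, list[datetime]],
    value_event: str,
    return_event: str,
    window: timedelta,
) -> bool:
    """A new user has reached value when they complete X within Y time.

    Here X is one primary value event plus one supporting return event.
    Both must occur within `window` of the user's first open (an assumption).
    """
    deadline = first_open + window

    def happened_in_window(name: str) -> bool:
        return any(first_open <= t <= deadline for t in event_times.get(name, []))

    return happened_in_window(value_event) and happened_in_window(return_event)


first_open = datetime(2024, 3, 1)
on_time = {
    "onboarding_completed": [datetime(2024, 3, 1, 0, 10)],
    "second_session": [datetime(2024, 3, 5)],
}
too_late = {
    "onboarding_completed": [datetime(2024, 3, 1, 0, 10)],
    "second_session": [datetime(2024, 3, 12)],
}
week = timedelta(days=7)
print(reached_value(first_open, on_time, "onboarding_completed", "second_session", week))
print(reached_value(first_open, too_late, "onboarding_completed", "second_session", week))
```

Passing the two event names and the window as parameters keeps X and Y explicit. That makes it easy to test a stricter or looser definition without rewriting the check.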

2. Track fewer signals, but make them actionable

Most teams track too much and understand too little.

For a first pass, start with a short event list:

1. First open

2. Account creation or sign-in

3. Onboarding completion

4. First core action

5. Second session or return within a defined period

6. Response to a push, email, or in-app prompt

Add only a few properties at the start, such as acquisition source, platform, app version, and market. Two practical tips:

• Use a one-page tracking plan. It should include the event name, definition, owner, and reason the event matters.

• If a metric does not change a decision made by the PM, designer, or lifecycle owner, remove it from the main review.
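A one-page tracking plan can double as a machine-checkable event dictionary. The sketch below encodes the six starter events with the four fields suggested above. It then audits an observed event stream in two directions: names that are not in the plan, and planned events that never arrive. The event names, owners, and wording are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackedEvent:
    name: str
    definition: str
    owner: str
    why_it_matters: str


# Hypothetical one-page plan covering the six starter events.
TRACKING_PLAN = [
    TrackedEvent("first_open", "First app open on a device", "Analytics", "Defines the new-user cohort"),
    TrackedEvent("sign_in", "Account created or signed in", "Product", "Ties activity to one user"),
    TrackedEvent("onboarding_completed", "Final onboarding step done", "Product", "First value gate"),
    TrackedEvent("first_core_action", "First use of the core feature", "Product", "Primary value event"),
    TrackedEvent("second_session", "Return within the defined period", "Analytics", "Early habit signal"),
    TrackedEvent("prompt_response", "Opened a push, email, or in-app prompt", "Lifecycle", "Lifecycle reach"),
]


def audit_events(observed_names: list[str], plan: list[TrackedEvent]) -> tuple[set[str], set[str]]:
    """Return (events seen but not in the plan, planned events never seen)."""
    planned = {e.name for e in plan}
    seen = set(observed_names)
    return seen - planned, planned - seen


unplanned, missing = audit_events(["first_open", "onboarding_completed", "button_tap_v2"], TRACKING_PLAN)
print("Not in plan:", unplanned)
print("Never observed:", sorted(missing))
```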

3. Treat the model as a decision tool, not a trophy

The model should earn its place in the operating rhythm.

Every risk segment should map to an action, an owner, and a review date. If the score does not change messaging, onboarding, experiments, or prioritization within two weeks, it is reporting, not action. Two practical tips:

• Build segments around actions the team can take: unfinished onboarding, no first core action, shallow engagement, or campaign-only return.

• Review false positives. If many “high-risk” users were going to return anyway, the model may be measuring noise rather than product friction.
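The false-positive check reduces to a single ratio: of the users the model flagged as high-risk, how many returned anyway. Below is a minimal sketch, with a hypothetical `SegmentPlan` record that holds the action, owner, and review date for one segment. One caution: `returned_anyway` should come from a holdout group that received no targeted message. Otherwise, users won back by the intervention are miscounted as false positives. The 40% threshold is an arbitrary placeholder.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SegmentPlan:
    # Every risk segment carries an action, an owner, and a review date.
    segment: str
    action: str
    owner: str
    review_date: date


def false_positive_rate(flagged: set[str], returned_anyway: set[str]) -> float:
    """Share of flagged high-risk users who returned without intervention."""
    if not flagged:
        return 0.0
    return len(flagged & returned_anyway) / len(flagged)


NOISE_THRESHOLD = 0.4  # hypothetical cut-off for "the model may be measuring noise"

plan = SegmentPlan("no_first_core_action", "One prompt to the first core action", "Lifecycle PM", date(2024, 2, 1))
flagged_holdout = {"u1", "u2", "u3", "u4"}
returned_holdout = {"u2", "u4", "u9"}  # u9 was never flagged, so it does not count

rate = false_positive_rate(flagged_holdout, returned_holdout)
if rate > NOISE_THRESHOLD:
    print(f"{plan.owner}: revisit '{plan.segment}' by {plan.review_date}, "
          f"{rate:.0%} of flagged users returned anyway")
```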

4. Review churn signals weekly, not quarterly

Quarterly churn reviews are too slow for new-user problems. By that point, the product may have already trained users into the wrong habit.

Early churn signals should be reviewed weekly. The review does not need to be long. If the dashboard is clean, thirty minutes is enough. Two practical tips:

• In the weekly review, focus on three things: new-user cohorts, drop-off between onboarding and first value, and the size of the largest at-risk segment.

• End every review with one action: one experiment to launch, one lifecycle message to revise, or one onboarding fix to ship.
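All three weekly-review numbers can come from one small function that runs over a cohort's per-user flags. This sketch assumes each user is a dictionary with hypothetical keys `onboarding_completed` and `first_core_action`, plus an optional `segment` label. That label would come from whatever segmentation the team already uses.

```python
from collections import Counter


def weekly_review(users: list[dict]) -> dict[str, object]:
    """Summarize one new-user cohort for the weekly churn review."""
    onboarded = [u for u in users if u.get("onboarding_completed")]
    reached_value = [u for u in onboarded if u.get("first_core_action")]
    # Drop-off between onboarding and first value, as a fraction of onboarded users.
    drop_off = 1 - len(reached_value) / len(onboarded) if onboarded else 0.0
    segments = Counter(u["segment"] for u in users if u.get("segment"))
    largest = segments.most_common(1)[0] if segments else (None, 0)
    return {
        "cohort_size": len(users),
        "onboarding_to_value_drop_off": drop_off,
        "largest_at_risk_segment": largest,
    }


cohort = [
    {"onboarding_completed": True, "first_core_action": True},
    {"onboarding_completed": True, "first_core_action": False, "segment": "no_first_core_action"},
    {"onboarding_completed": True, "first_core_action": False, "segment": "no_first_core_action"},
    {"onboarding_completed": False, "segment": "unfinished_onboarding"},
]
print(weekly_review(cohort))
```

Because the output is only three numbers, the review stays short. The time goes to choosing the one action that closes the meeting.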

Early churn is not just a growth or finance KPI. It is one of the strongest product signals a team will get. It means the user engaged enough with the product to form an opinion, and that opinion was not strong enough to create habit.

That is hard news. It is also useful news.

Launch week can buy attention. It cannot buy a second meaningful session. It cannot buy routine use. It cannot buy trust. Those have to be earned in the product. That is why teams should treat early churn as a map. Not a perfect map. Sometimes a rough one. But an honest one. It shows where the product delivers value quickly and where it asks too much of the user.

For most teams, more launch noise is not the answer. What they need is a crisp definition of value, a smaller set of signals, and a weekly discipline of turning behavior into product action.

Bio:

Meihui Chen is a senior data scientist focused on retention, identity, and growth analytics, with work that improved activation, targeting, and active-user growth.